The journey from cached PNG tiles to on-the-fly generated vector tiles

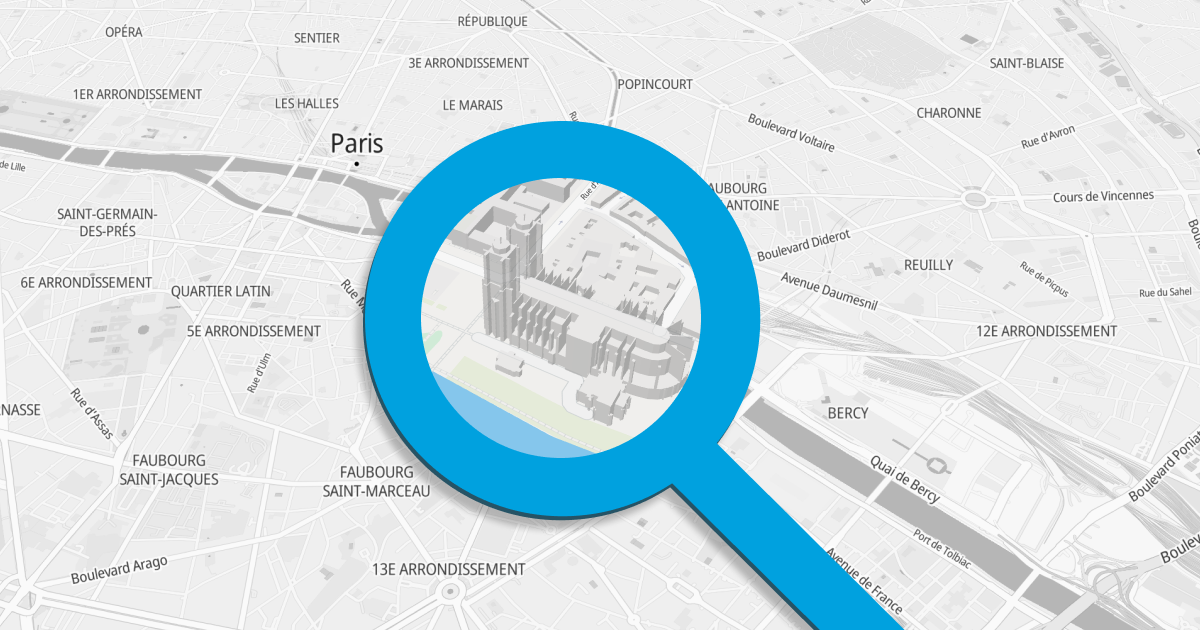

Mireo created its first WEB map in June 2005, roughly 5 months after Google launched their Maps. Google Maps were originally created by brothers Lars and Jens Rasmussen at Where 2 Technologies, and from many aspects, it was a breakthrough in both mapping and WEB programming. Mireo, on the other hand, created the first navigable digital map of Croatia, and once we saw Google Maps, we eagerly wanted to do the same for Croatia (at the first launch, Google Maps were covering USA territory only, using map data from NAVTEQ). Mireo's Croatian maps on the WEB were a huge success, and even today, Mireo is mostly known in Croatia for this map.

Back at that time WEB map was a patchwork of 256x256 PNG tiles pre-rendered on the server-side. Zoom levels were fixed, and each more detailed zoom level doubled the resolution from the previous one. Our first PNG map tiles were generated by Windows GDI API and served by IIS. We used Windows GDI since we've had a map rendering engine written for Windows CE - the most popular OS at that time for GPS car navigation boxes.

Switch to Linux (actually platform-independent) map rendering was enabled by the colossal work of late Maxim Shemenarev and his Anti-Grain Geometry library. AGG is a work of genius. We've learned from Maxim's library how to render anti-aliased roads, how to render complex polygons, the importance of L1 cache locality, and how to do all of these things as fast as possible. The result was astonishing - we've sped up map rendering by almost 10x compared to Windows GDI, and we've made it platform-independent.

A couple of years after we've launched the WEB map of Croatia, we've expanded the map coverage to the whole of Europe. Regardless of the PNG map rendering speedup we've achieved, the size of pre-rendered tiles exploded. The total size of all pre-generated 256x256 map tiles for Europe for all zoom levels was above 600 GB. When Steve Jobs introduced Retina Display with iPhone 4, the headache quadrupled - now we had to generate two sets of tiles, one set with 256x256 tiles and the other one with tiles of size 512x512. European maps' size grew to over 3.2 TB. That's hard to create, hard to transfer, and terribly difficult to update.

With the introduction of vector map tiles and the WebGL standard, things have changed. Vector tiles contain a much more compact geometry description of map objects than fully rendered PNG bitmaps. However, clients (WEB browsers) have to render map geometries on their own, which would be way too slow without a hardware-accelerated graphics engine. WebGL made that possible, and it even enabled attractive new features - map look and feel (style) may be dynamically changed, zoom levels do not need to be fixed anymore, and map can be rotated and tilted freely.

The AGG-like map rendering pipeline we've created earlier allowed us to easily change the output of the map rendering engine from PNG image to vector tile. PNG rendering pipeline looks like this:

fetch data → clip → project → render bitmap → save PNG

while vector tile generation pipeline has the form:

fetch data → clip → project → output to Protobuf

In other words, instead of rendering prepared geometry to PNG, the geometry and accompanying attributes are rendered into Protobuf format (the vector map tile Protobuf format was standardized by Mapbox.) Note that extracting vector tile from map database does not actually render anything; it just extracts map geometries and attributes of a small portion of the map and converts the data to Protobuf format. The GPU acceleration cannot be used here as no rendering is involved, and only the CPU may do the job.

When we started to generate vector files, we took the same approach as with PNG tiles, and we've pre-rendered all vector tiles on the server to be able to quickly fetch tiles directly from the disk upon request. It was already a huge saving - instead of 3.2 TB data for European PNG tiles, we had around 60 GB of vector tiles. That was 53x less space! Still, we wanted more.

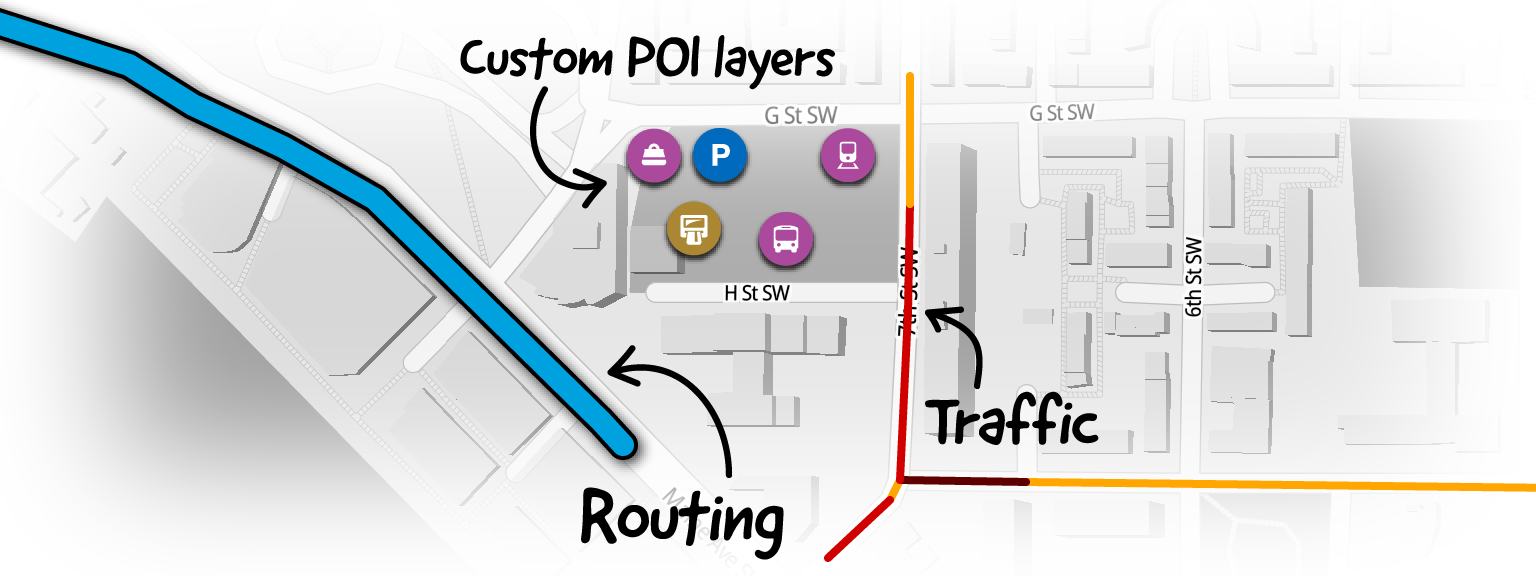

Map data consists of road and polygon geometries, attributes, names, driving rules, signposts, and other stuff. Rendering a map is just one piece of work that the GPS navigation system does with the map data. Searching locations by name and calculating routes on the road network is another set of features that the navigation system has to deal with. Those other things require some unique indexing and routing graph overlay construction to be embedded within the map database. Mireo developed a long time ago a special, compact map data format that contains all the extra information that a GPS navigation system needs to run fast, even on a small ARM machine. As a ballpark figure, the size of maps of all European countries in Mireo format is about 10GB (that includes a large part of Russia and complete Turkey).

We use the same compact map database as the source of data on the server-side for vector tile generation. Now, instead of pre-generating all vector tiles at once and then serving just plain files from the WEB server, we wanted to extract vector tiles directly from our map format, thus reducing the storage size from 60GB to only 10GB in the European case.

And that's precisely where we ended up - by carefully implemented data transformation pipeline which heavily exploits CPU cache locality, shared-nothing architecture, and minimal re-allocation policy - we achieved the performance we wanted. The server creates an average 1024x1024 vector tile in about 4ms from our map data format, and that includes reading data from the disk, cropping map portion, and transforming the data to Protobuf format. Along the way, we've used some very heavy artillery like advanced C++20 features, Eric Niebler's range-v3 C++ library, which fits perfectly into the AGG pipeline, and pretty much everything we know about low-level programming.